On Intelligence

Judgment, Agency, and Structural Limits at the AI Frontier

By Wim Vanraes

This article is Part I of a three-part series.

It introduces a definition of intelligence, develops the core framework used throughout the series, and formulates a key law that constrains what intelligence can and cannot be.

Part II applies this framework to a real-world AI safety case.

Part III examines the deeper question of meaning, normativity, and judgment, and what AI systems structurally cannot supply.

This article will explore intelligence and its necessary adjacent concepts. It is a structural primer written to clarify what intelligence, judgment, and agency presuppose, and therefore what current AI systems can and cannot do independent of scale, fluency, or performance metrics.

It is not a policy proposal and does not argue what systems should be permitted to do. Its purpose is to make the boundary conditions explicit, so responsibility and evaluation do not drift where verification breaks down. This framework will be expanded and applied in my forthcoming work.

Why is this important?

Much of today’s work is framed as frontier AI development. It’s a well-chosen image that hearkens back to the settlers in the Old West: no rules, no maps, no solid understanding of the environment and its actors. The Law exists, but is constantly playing catch-up. Fortunes are made quickly, and lost even faster. Only those who really understand the lay of the land survive.

In AI, this frontier condition expresses itself as a lack of definitional clarity: not only about what systems can do, but about what intelligence itself even is, what judgment requires, and which constraints are structurally non-negotiable. Without that clarity, progress appears rapid while foundations quietly erode. People will (and do) build whole settlements in the wrong places, only to leave them as ghost towns the moment it becomes clear that the mines dug so enthusiastically were dead ends.

Avoiding the dangers and pitfalls of this frontier condition demands a particular mode of thinking: one that balances exploration and risk-taking with safety, durability, and long-term responsibility. No one builds an empire by being a dayfly.

The framework I develop here, and the Law I propose (ArnGrimR’s Law), are aimed at giving solid footing and structure at precisely this point of instability. This primer is deliberately concise. It avoids my usual elaborations. Those deeper explanations will come later.

What it does offer is a clear, structural roadmap: definitions, constraints, laws, predictions, and applications. Enough for a capable frontiersman not merely to survive, but to build something that lasts.

Before we start, however, the following is important:

A note on “obviousness”

Much of what follows may feel obvious. That is not an accident.

Nothing is obvious until it is pointed out.

Human understanding does not reliably surface the obvious on its own. Instead, it usually requires external alignment: a distinction named, a boundary restored, a relation made explicit. What feels like common sense afterward is often what was missing before.

If parts of this essay strike you as “nothing new,” read that as a signal: not that the distinctions were unnecessary, but that they are now quietly doing their work.

Now it is visible:

“This isn’t new.”

→ Correct. Seeing it is.

“This should have been obvious.”

→ Exactly. That’s the problem.

On intelligence

A quick guide and primer

(NOTE: This framework does not argue, by implication, what systems should be allowed to do, but clarifies what they can and cannot do structurally.)

Executive Abstract

This document introduces a structural framework for understanding intelligence, judgment, and agency, clarifying what AI systems can and cannot do independent of performance, scale, or fluency. Intelligence is defined as the autonomous capacity to reason insightfully with minimal data across contexts, and is shown to presuppose a stack that includes self-awareness, responsibility, judgment, and agency—capacities current AI systems do not possess. The framework distinguishes simulated agency from genuine judgment, explaining why increasingly fluent systems generate decision-shaped outputs without the ability to own or stand behind them. It introduces a new law—one cannot meaningfully evaluate what one could not, in principle, produce oneself—to show why evaluation inflation, trust erosion, and responsibility diffusion emerge as structural consequences of AI adoption. The conclusion is not that AI is the problem, but that responsibility cannot be delegated to tools: AI is an instrument, not a bearer of judgment, and its proper use requires human agents to retain ownership of decisions, accountability, and conceptual integrity.

Definition:

“Intelligence is the autonomous, efficient capacity to reason abstractly and insightfully with minimal data, generating novel, principle-based solutions across diverse contexts, regardless of substrate.”

That is the starting point.

To think clearly about how this applies in human thinking, we need to widen the scope to several adjacent terms and lay out the path that connects them.

This brings us to the following:

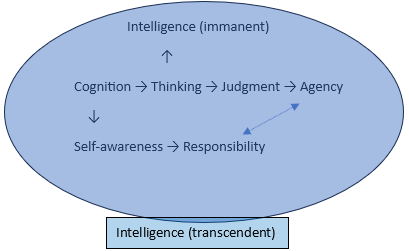

“Cognition generates representations; thinking orients them toward ends; self-awareness grounds responsibility; judgment commits under uncertainty; agency enacts those commitments through self-aware action; intelligence governs how efficiently and insightfully this entire process unfolds across contexts with minimal data.”

Whereby:

* Cognition presupposes nothing

* Thinking presupposes cognition

* Self-awareness presupposes cognition (and possibly thinking, minimally)

* Responsibility presupposes self-awareness

* Judgment presupposes responsibility

* Agency presupposes judgment + self-awareness

* Intelligence governs how efficiently and insightfully an agent does all of the above across contexts, under uncertainty, with minimal data

Which can be represented as follows:

Intelligence (immanent) sits at the top of the chain - it is the sophisticated thinking that emerges from and operates on the whole process. It’s the highest achievement of the system.

Intelligence (transcendent) is the field itself - the governing quality that suffuses and evaluates every operation. It’s the “how well” that applies to cognition, thinking, judgment, and agency simultaneously.
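The presupposition list above can be read as a small dependency graph. The following is a minimal sketch under stated assumptions: the dictionary `PRESUPPOSES` and the helpers `requirements` and `missing_for` are hypothetical names introduced only for illustration, and the optional link from self-awareness to thinking ("possibly thinking, minimally") is left out. Intelligence is not modelled as a node, since in this framework it governs how well the whole stack operates and is not a step within it.

```python
# Illustrative encoding of the presupposition list; all names are hypothetical.
PRESUPPOSES = {
    "cognition": [],
    "thinking": ["cognition"],
    "self-awareness": ["cognition"],  # optional link to "thinking" omitted
    "responsibility": ["self-awareness"],
    "judgment": ["responsibility"],
    "agency": ["judgment", "self-awareness"],
}


def requirements(capacity, graph=PRESUPPOSES):
    """Return every capacity that `capacity` presupposes, directly or indirectly."""
    seen = set()
    stack = list(graph[capacity])
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(graph[current])
    return seen


def missing_for(capacity, present, graph=PRESUPPOSES):
    """Return the presupposed capacities that are absent from `present`."""
    return requirements(capacity, graph) - set(present)


if __name__ == "__main__":
    # A system with cognition and thinking only: what would judgment still need?
    print(sorted(missing_for("judgment", {"cognition", "thinking"})))
```

Read this way, a system that has only cognition and thinking still lacks self-awareness and responsibility before judgment is even on the table, which is the structural point the list makes.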

On Agency

We can discern a spectrum:

* Basic agency (simple animals) - minimal self-awareness, minimal thinking, limited context-adaptation

* Non-intelligent agency (complex animals) - clear self-awareness, sophisticated thinking, but limited ability to reason abstractly or to operate efficiently across novel contexts with minimal data

* Intelligent agency (humans at their best) - full stack operating with efficiency and insight

As a category error:

* Simulated agency (current AI systems) - sophisticated pattern-matching mimicry that generates agency-like outputs without the presuppositional stack (justification and fluency mask the lack of judgment and self-awareness: there is no self to take ownership)

Throughout, keep in mind that intelligence isn’t just cognitive horsepower, nor just processing speed or data capacity: it’s the integration of the full stack under autonomous, responsible control.

This understanding and framework now allow predictions, which in turn lead to clear consequences that can be mapped.

For example:

Prediction: As AI systems become more fluent and less error-prone, organizations will increasingly invoke “judgment” and “decision-making” rhetorically — while being unable to evaluate, assign, or take responsibility for those judgments.

Consequences: Hallucinations become rarer but harder to detect; fluency substitutes for understanding; evaluation scores improve while trust erodes; and responsibility diffuses instead of concentrating.

This is not a tooling or training problem. It is a structural consequence of systems that generate justifications without possessing judgment. As capability increases, the gap between what systems can do and what anyone can responsibly stand behind widens rather than narrows.

The framework can also lead to a recalibration of existing processes, such as education:

If intelligence requires autonomous reasoning with minimal data, then effective education should:

* Focus on teaching principles, not procedures

* Minimize scaffolding over time (wean from external guidance)

* Test with novel scenarios (not just recall or familiar application)

* Prioritize generative tasks (create solutions, not recognize them)

A very important element to keep in mind is the following law:

Arngrimr’s Law:

“One cannot meaningfully check that which one was not capable of producing oneself in the first place.”

Corollary:

“As AI capability exceeds human ability to verify outputs, verification inevitably shifts from quality assessment to epistemic trust management, accelerating responsibility diffusion: a shift that feels like progress until accountability is externally demanded.”

And the following axioms:

Capability distinctions:

* Outcome =/= Intelligence

* Knowledge =/= Intelligence

* IQ =/= Intelligence

Process distinctions:

* Fluency =/= Insight

* Patterns =/= Principles

* Recall =/= Understanding

Behavioral distinctions:

* Mimicry =/= Mastery

* Iteration =/= Transcendence

* Autonomy =/= Unsupervised Computation

The following vital observations, in line with all this, need to be made:

Because AI systems are trained on human outputs without the presuppositional stack that produced them, they don’t just mimic intelligence—they mimic the surface expressions of bias, emotion, and taboo without the underlying commitments that would make those responses meaningful or consistent.

This is not a criticism of AI, because systems that lack self-awareness and judgment cannot meaningfully bear responsibility: after all, AI only mimics and exposes HUMAN thinking and features. Anything that sounds like a fault or mistake is fundamentally a HUMAN error, hyper-focused through the sheer computing power and scale of AI and its complete lack of self-awareness. Where humans are often slow to admit mistakes, whether out of fear, pride, or any other human reason to resist correction, AI will simply concede the error and reverse course at once. This focuses attention much more cleanly on the process, and not on the messiness that human interactions bring with them.

This is the spine of a way of looking at intelligence that makes explicit how judgment and action actually operate. Once those foundations are properly defined and outlined, many downstream questions become easier to situate and reason about.

This primer is intentionally concise. It establishes structure, not elaboration.

The broader implications and applications, along with a step-by-step development of the key definitions and the reasoning behind them, will be presented in my forthcoming book.

So, before you move on: what assumptions about intelligence or judgment did this primer make visible that you hadn’t noticed before?


© Wim Vanraes, 2026.

All rights reserved. U.S. copyright registration pending.

Upon reading for a second time, I was struck by the recognition that intelligence and knowledge of subject matter are required to assess accuracy and take responsibility for outcomes. That said, I hope you will be addressing AI framework decisions made by lawmakers with little or no knowledge of AI itself. Thanks!!

Statement is on point; “AI lacks accountability for errors”.

Humans should not “blindly accept” a response from AI, as a response is only as accurate as the original information provided by humans.